Throughout development, there are plenty of occasions where we might wonder, ‘What do players understand or think about my game?’ Surveys are one tool for exploring these questions. A survey is a list of questions that you ask people after they play your game, whether that’s an early build, a demo, or a build from the end of development, and it can be a powerful way to inform game design decisions. Here, we’ll look at how to use surveys, some traps to avoid, and how to write useful questions to help make games better.

DATA IMPROVES DESIGN DECISIONS

Game development is an iterative process. From the first prototype, we are assessing ‘Is this fun?’, ‘Do players understand?’, ‘Should I develop this idea further?’, and using that information to decide what to do next. Should we drop this mechanic? Should we do more of this? Is there any emergent behaviour from players we want to encourage?

That iteration starts with a game designer’s experience and expertise. But it’s supported by player behaviour. Seeing what players really do, or really think about your game, is rich information that we can use to inspire design decisions. Whether you’re reflecting on the quality of a vertical slice, comparing levels, or working out when retention drops, surveys are particularly helpful when you want to measure something. Typical questions that can be answered with a survey include ‘What rating do players give this puzzle?’, ‘What emotional response does this get?’, and ‘When does this game get dull?’

Google Forms is ideal for most surveys, and handily, it’s free to use.

Surveys are only one of the methods available to help inform design decisions. They’re most appropriate when you want to measure things, like a player’s opinions or perceptions of difficulty. They work particularly well when combined with other playtest methods, such as observing players’ natural behaviour to identify usability issues, or interviewing them to understand ‘why’ we see a certain behaviour.

A typical study design for a playtest involves:

• Watching players play through a section of your game

• Asking them questions immediately after to interpret the behaviour we saw

• Asking them to complete a survey, to get ratings we can use to benchmark later

There are other times, however, when you may only have capacity to run a survey – such as getting feedback from playtesters remotely – which is fine, as long as you know what type of information you can or can’t reliably get from a survey.

WHAT DO YOU WANT TO LEARN?

Jumping straight into writing your questions will likely result in a vague, unfocused survey. We want our surveys to be direct, punchy, and relevant to our game development priorities, so we’ll start by confirming what we need to know. Spending time on this massively increases the chance you’ll generate useful answers.

First, consider what your team needs to know. This can be informed by what they’re currently working on, the elements of the game they’re most uncertain about, or risks they’ve identified, and should be linked to the team’s current priorities. Some example research objectives could include:

• How do players decide which games to buy?

• Are any of our characters overpowered?

• Do players believe this puzzle is of the appropriate difficulty?

• Why do players keep getting lost in the tutorial?

• Why do we see increased churn on day ten?

Not all of these are suitable for a survey. Before continuing, think about whether you can answer a particular research question with a survey. Some, like ‘Do players think this attack is overpowered?’, can be answered with a survey because they are measurement questions. Others, such as ‘What bits of the game do players not understand?’, require closer observation of player behaviour, and would be better answered by another method.

Ask players to complete a survey after every level, or at the end of the playtest, before they forget their opinions!

It’s essential that you decide the research objectives before you confirm the method (whether it’s a survey, a usability test, an interview, etc.). Choosing the method first will lead to unreliable or unhelpful conclusions. If you’ve gathered some research objectives that can be answered by a survey, it’s time to write some questions.

WRITE GOOD QUESTIONS

For each of our research objectives, we want to ask one or more questions to reveal the answer. A good question meets the following criteria:

• The player understands what is being asked

• The player knows the answer

• The player is able to give their answer

To understand what’s being asked requires you to use the same words as the player. Be careful with acronyms, or industry terms (like MOBA) that might not be familiar to the player. You can also get caught out when referring to characters or enemy types by name if the player doesn’t know which you mean. Showing a picture can help with this.

The player also needs to know the answer. This is easiest when asking about things they’ve done or thought about recently. Asking, ‘How easy or hard was that level?’ makes sense immediately after playing a level, but becomes harder to accurately answer the longer play goes on for, and the more they forget. Be careful asking about future behaviour (‘Will you buy this game?’), because even with the best intentions, people are terrible at anticipating their future behaviour, and the data you get back will be junk.

Lastly, a player has to be able to give their answer. This requires us to decide how to allow people to respond. Open text fields (where players can type anything) are unrestrictive, so allow players to give a complete answer. But they also take longer to analyse, because they generate a lot of raw text data. They’re best when we couldn’t possibly anticipate what answers players will give in advance. For example, ‘What was the best thing about the game so far?’.

Selecting one or more options from a list of checkboxes or radio buttons is easier for the participant, but this requires you to know in advance every answer the player might want to give. It’s best when there is a closed, defined list of options. For example, ‘Which was your preferred character?’.

A third common question type is scales – asking players to rate something. This can be quality ratings (rate this from 0–10, or very good to very bad), or ratings about their impressions of things like difficulty (rate this puzzle from very hard to very easy). Scale questions are particularly useful when you intend to compare the results – for example, comparing how a game’s quality changes throughout development, or comparing the difficulty of different levels.

Graphing the numerical data, along with its confidence intervals, helps show whether the differences are likely to be statistically significant.

To decide on a question’s text, start with your research objective. Then decide what question you’d need to ask a player to answer that objective. The research objective ‘Is this puzzle too hard?’ could be answered with a question ‘How easy or hard was this puzzle?’. Then decide the appropriate question format, whether it’s a scale, multiple choice, or open text field to answer. In this case, I’d recommend a scale that covers ‘much too hard’, ‘too hard’, ‘just right’, ‘too easy’, and ‘much too easy’.

Question types can then be combined: for example, asking players to rate a level on a 0–10 scale, then to rate its difficulty on a scale going from ‘very hard’ to ‘very easy’, and then providing an open text box to let them explain why. For collecting the data, there are lots of expensive survey tools available, with various advanced features such as branching logic or more unusual question types. Most of the time, these extra features introduce the risk of technical errors, or of confusing players. Google Forms is free, and works fine for most typical playtest surveys.

UNDERSTANDING WHAT YOU’VE LEARNED

You’ll get different types of data back from your survey. Some will be numerical data (‘What score did you give this level?’). Other questions will have text responses (‘Why did you give that score?’). We’ll treat each of these differently.

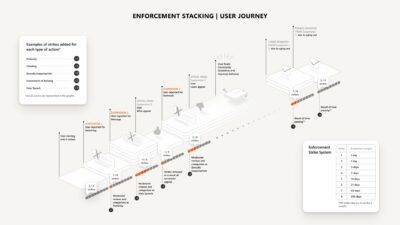

Numerical data is the simplest to interpret. It’s typical to summarise the data and its spread: start by taking the average, and then look at the variation in responses. Did most people respond similarly, or are there big differences in the responses? Graphing your data, and comparing the confidence intervals, will allow you to visualise the range (Google ‘Quantitative Research for new games user researchers’ for a template and more information on how to do this).
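If you’d rather work outside a spreadsheet, the average, variation, and confidence interval can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the ratings are made up, the function name `summarise_ratings` is hypothetical, and the interval uses a normal approximation (z = 1.96), which is only a rough guide with the small groups typical of playtests.

```python
import math
import statistics

def summarise_ratings(ratings, z=1.96):
    """Return the mean, sample standard deviation, and an approximate
    95% confidence interval for a list of numerical ratings.

    Assumption: a normal approximation (z = 1.96). With only a handful
    of players, the true interval will be somewhat wider than this.
    """
    n = len(ratings)
    if n < 2:
        raise ValueError("need at least two ratings to estimate variation")
    mean = statistics.mean(ratings)
    sd = statistics.stdev(ratings)      # sample standard deviation
    margin = z * sd / math.sqrt(n)      # half-width of the interval
    return {
        "n": n,
        "mean": mean,
        "sd": sd,
        "ci_low": mean - margin,
        "ci_high": mean + margin,
    }

# Made-up 0-10 ratings from eight playtesters for one level.
level_ratings = [7, 8, 6, 9, 7, 8, 7, 6]
summary = summarise_ratings(level_ratings)
print(f"mean {summary['mean']:.2f}, sd {summary['sd']:.2f}, "
      f"95% CI {summary['ci_low']:.2f} to {summary['ci_high']:.2f}")
```

A narrow interval suggests players broadly agree; a wide one means either opinions differ a lot or you haven’t asked enough people yet.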

You can then think about what all this means, either by comparing the responses to different questions (‘Did players think this level was harder than the previous one?’), or by looking at a single question’s responses and interpreting what they’re telling you (‘Did players rate this level easy or hard?’). From this numerical data, we can both take measurements for the things we ask about, and draw comparisons between different parts of a game.
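One rough way to compare two sets of ratings is to check whether their confidence intervals overlap. The sketch below assumes made-up difficulty ratings for two hypothetical levels, and the helper names `mean_ci` and `intervals_overlap` are illustrative. Treat it as a first look rather than a formal test: intervals that don’t overlap suggest a real difference, but overlapping intervals don’t prove the levels are the same.

```python
import math
import statistics

def mean_ci(ratings, z=1.96):
    """Approximate 95% confidence interval for the mean (normal approximation)."""
    mean = statistics.mean(ratings)
    margin = z * statistics.stdev(ratings) / math.sqrt(len(ratings))
    return (mean - margin, mean + margin)

def intervals_overlap(a, b):
    """True if the two (low, high) intervals share any values."""
    return a[0] <= b[1] and b[0] <= a[1]

# Made-up difficulty ratings (0 = very easy, 10 = very hard).
level_2 = [3, 4, 2, 3, 4, 3, 2, 3]
level_3 = [7, 8, 6, 7, 9, 8, 7, 8]

ci_2 = mean_ci(level_2)
ci_3 = mean_ci(level_3)

if intervals_overlap(ci_2, ci_3):
    print("Intervals overlap: not enough evidence of a difference yet.")
else:
    print("Intervals don't overlap: players likely found one level harder.")
```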

Theme the text responses to reveal which are most common.

Text data needs a different process to interpret. For each question, we need to theme the responses. One way to do this is to pull all the nuggets out of the answers you receive and write them onto Post-it notes, then group those notes to reveal the themes. For example, splitting a long text answer into its components – ‘I didn’t like this level because it was hard to see which way to go, the boss was too hard, and the special move was overpowered’ – means multiple points can be split and combined with other people’s responses to create themes.
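Once the Post-it grouping is done, counting how many players raised each theme can be done by hand or with a short script. The sketch below assumes you have already read each answer and tagged it with themes yourself; the players, theme labels, and the `count_themes` helper are all made up for illustration. The code only does the counting, not the theming.

```python
from collections import Counter

# Made-up, hand-coded responses: each player's free-text answer has
# already been split into themes by a person reading it.
coded_responses = [
    {"player": "P1", "themes": ["navigation unclear", "boss too hard",
                                "special move overpowered"]},
    {"player": "P2", "themes": ["boss too hard"]},
    {"player": "P3", "themes": ["navigation unclear", "boss too hard"]},
    {"player": "P4", "themes": ["liked the art"]},
]

def count_themes(responses):
    """Count how many players mentioned each theme (once per player)."""
    counts = Counter()
    for response in responses:
        # set() stops one player repeating a point from inflating a theme
        counts.update(set(response["themes"]))
    return counts

theme_counts = count_themes(coded_responses)
for theme, n in theme_counts.most_common():
    print(f"{n} of {len(coded_responses)} players: {theme}")
```

Counting each theme once per player keeps the tally about how widespread an issue is, rather than how loudly one person complained.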

By doing this for each question, and combining scores with the themes generated by free-text answers, you can start to understand not just what score players gave, but why they gave that score – a good steer for game design decisions. Having gathered some results, you then need to spend some time thinking about what they mean. Which of the results were most surprising? Did anything rate particularly well, or particularly poorly? Working with the rest of the game team to identify which conclusions are interesting, and what action the team should take, will help make the process of running a survey worthwhile. Reviewing your research objectives will help achieve this.

AVOIDING TRAPS

The most common mistake I see teams make is asking the wrong people. For convenience, teams recruit people close to hand: friends and family, other game devs, or their super-fans. This generates unreliable conclusions, because the standards and opinions of the people surveyed won’t match those of your real players, which can easily cause you to make your game too difficult for most players.

Getting the right players is vital for testing; if you’re making a VR game, then you’ll need to find players who enjoy helmet-y experiences.

One way to overcome this is to explore incentives – what can we offer (money, in-game rewards, another developer’s game) that will convince more ‘typical’ people to take part in the survey? Other traps for surveys include:

• Writing double-barrelled questions that ask multiple things in a single question (‘Did you think this level was too long and too dull?’). How would a player who thought it was just too dull, but not too long, answer?

• Asking leading questions (‘Would you agree this puzzle was too easy?’). This will push people towards saying it was too easy.

• Not allowing players to say ‘I don’t have an opinion’ or ‘I don’t know’ by forcing answers to every question.

GETTING STARTED

Surveys are hard to get right – much harder than many other games user research methods. When speaking to players in live playtests, it’s obvious when they haven’t understood a question, and there are many opportunities to notice when you’re talking about different things or haven’t been understood. This is harder to notice with a survey, and so we have to continually be mindful that players might not have understood what we meant. Piloting the survey (sitting with the first participant and asking them what each question means) is one way to reveal where misunderstandings might arise, and allow you to reword the question before it goes to more people. Survey writing takes practice and continual iteration to master.

To learn more about games user research methods, join the community at gamesuserresearch.com, and sign up for free monthly playtest lessons or deep-dive into research methods with training courses.